I was today days old when I learned that a non-trivial fraction of the AI inference happening on the internet right now is running on idle gaming PCs in random houses. Not as a thought experiment — as the actual production setup of some of the most-visited AI sites in the world. The marketplace that makes this work is called Salad.

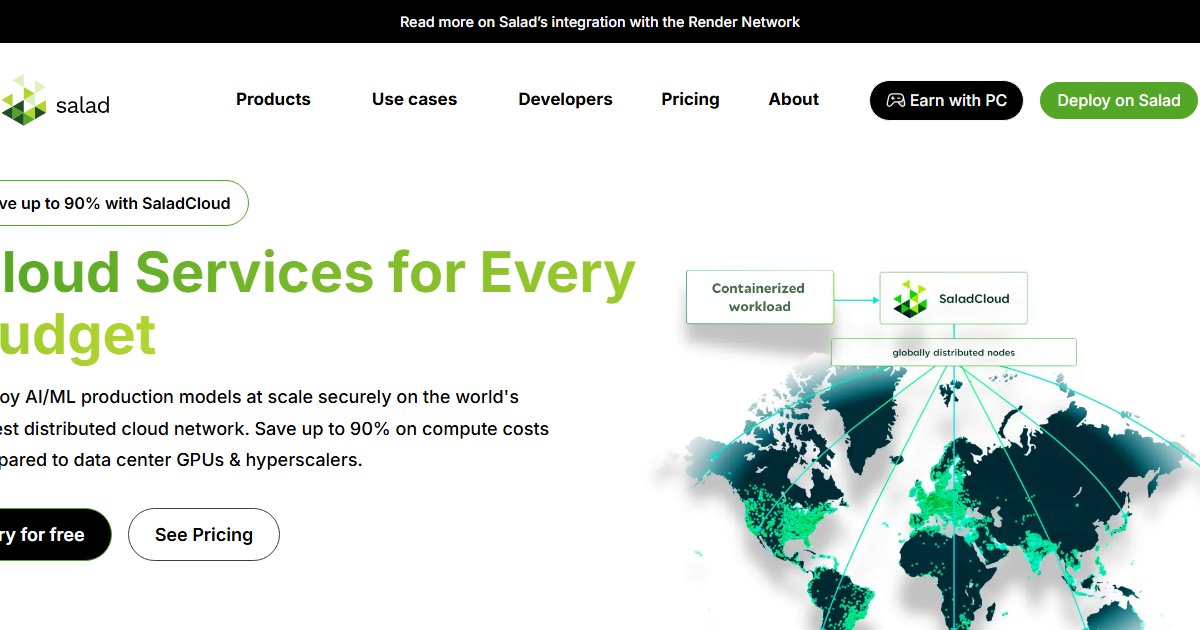

The pitch is simple: 60,000+ consumer GPUs (RTX 3060/3070/3080/3090/4070/4080-class) sitting idle in home offices and gaming rigs around the world, available for rent, billed by the minute at rates starting from $0.02 per hour. AI startups bring workloads. Gamers bring idle silicon. Salad orchestrates the matchmaking and takes a cut. Both sides actually benefit, which is rare.

The buy side — AI startups and inference workloads:

The economics are striking. $0.02 per hour for a consumer GPU is absurdly cheap compared to big-box clouds. Salad claims roughly 10x more AI inferences per dollar versus AWS, Azure, or GCP. That's not a marginal improvement — that's transformative for the margin-constrained ML businesses that are actually running this stuff in production.

Who's using it? Salad publishes case studies showing customers in the top 50 most-visited AI websites. One customer runs 10 million images per day on 600+ consumer GPUs. Another trains 15,000+ LoRAs per month. These aren't experiments — this is load-bearing inference infrastructure.
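To get a feel for what those numbers imply, here is a rough back-of-the-envelope sketch. It takes the 10 million images and 600 GPUs from the case study figure above and assumes, purely for illustration, that every GPU is billed around the clock at the $0.02 per hour floor price. Real fleets mix GPU classes and prices, so treat the cost as a lower bound rather than a quote.

```python
# Sanity-check the "10 million images per day on 600+ GPUs" figure.
SECONDS_PER_DAY = 24 * 60 * 60


def seconds_per_image(images_per_day: float, gpu_count: int) -> float:
    """Average wall-clock seconds each GPU has available per image."""
    return SECONDS_PER_DAY * gpu_count / images_per_day


def cost_per_thousand_images(
    images_per_day: float, gpu_count: int, price_per_gpu_hour: float
) -> float:
    """Fleet cost per 1,000 images if every GPU is billed 24 hours a day."""
    daily_cost = gpu_count * 24 * price_per_gpu_hour
    return daily_cost / images_per_day * 1000


IMAGES_PER_DAY = 10_000_000  # figure quoted in the case study
GPU_COUNT = 600              # lower bound, the case study says "600+"
FLOOR_PRICE = 0.02           # ASSUMPTION: advertised starting price, USD per GPU-hour

pace = seconds_per_image(IMAGES_PER_DAY, GPU_COUNT)
cost = cost_per_thousand_images(IMAGES_PER_DAY, GPU_COUNT, FLOOR_PRICE)

print(f"{pace:.2f} s per image per GPU")
print(f"${cost:.4f} per 1,000 images at the floor price")
```

Under these assumptions each GPU has a little over five seconds per image, and the fleet costs roughly three cents per thousand images. The real bill is likely higher, since faster cards are priced above the floor.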

The platform handles the security layer. Salad is SOC2 certified and has patented workload isolation so the buy side doesn't have to trust that a stranger's gaming PC isn't snooping on their proprietary model or data. That isolation is real enough that people are comfortable putting production inference there.

Best-case workloads: image generation, text-to-speech, speech-to-text, computer vision, small-model training, LoRA fine-tuning, batch processing. Worst-case: anything requiring a 70B+ model, multi-GPU H100-class training, anything that needs NVLink between cards. Know the constraints, match the workload.
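The 70B cutoff comes down to memory arithmetic. The sketch below estimates whether a model's weights fit on a single card. The function names, the 20% overhead allowance for activations and runtime buffers, and the VRAM sizes are illustrative assumptions, not figures from Salad.

```python
def weights_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bytes_per_param


def fits_on_single_gpu(
    params_billions: float,
    bytes_per_param: float,
    vram_gb: float,
    overhead_fraction: float = 0.2,  # ASSUMPTION: rough allowance for activations/buffers
) -> bool:
    """True if weights plus the assumed overhead fit in one card's VRAM."""
    needed = weights_gb(params_billions, bytes_per_param) * (1 + overhead_fraction)
    return needed <= vram_gb


FP16 = 2.0   # bytes per parameter at 16-bit precision
INT4 = 0.5   # bytes per parameter at 4-bit quantization

# Illustrative VRAM sizes: a 24 GB card (RTX 3090-class) and a 12 GB card.
print(fits_on_single_gpu(7, FP16, vram_gb=24))    # 7B at fp16 on 24 GB
print(fits_on_single_gpu(7, INT4, vram_gb=12))    # 7B at 4-bit on 12 GB
print(fits_on_single_gpu(70, FP16, vram_gb=24))   # 70B at fp16 on 24 GB
print(fits_on_single_gpu(70, INT4, vram_gb=24))   # 70B at 4-bit on 24 GB
```

A 70B model needs about 140 GB for fp16 weights alone, so it misses a 24 GB card by a wide margin even when quantized to 4 bits. Without NVLink between cards, splitting it across several consumer GPUs is not an attractive option either.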

The sell side — gamers monetizing idle GPUs:

Salad's desktop app turns your gaming PC's idle GPU into rentable compute. Install it, run it when you're not gaming, earn. The mechanics are straightforward: you're not picking workloads or optimizing for anything — the platform schedules whatever's in the queue. Inference, training, rendering, occasionally crypto-adjacent stuff.

The earnings are real but modest, and that's the honest part worth saying. An RTX 3080 or 4070 in a low-electricity-cost region might earn $10–40 per month if you leave it on most of the time. A 4090 in a cheap-power area could hit $100+. But in most of the developed world, with electricity at $0.10–0.15/kWh, the power bill eats into those earnings, and the GPU's heat cycles add wear and tear on top. You're not getting rich. You're getting somewhere between beer money and a small side income, depending on your GPU and your uptime.

The tradeoffs you take on: electricity cost eats into the margin, heat and wear may shorten the GPU's lifespan, and you are trusting Salad's isolation to keep workloads from seeing your files. That said, the alternative for most people is "my GPU sits idle 18 hours a day." Even modest earnings beat zero.
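Whether the margin survives your power bill is easy to check before installing anything. The sketch below subtracts electricity cost from gross earnings. The 250 W draw under load is an assumption for illustration (check your own card), and the function names are hypothetical. The 18 hours a day, the $10–40 earnings range, and the $0.10–0.15/kWh prices are the figures used above.

```python
def monthly_electricity_cost(
    gpu_watts: float, hours_per_day: float, price_per_kwh: float, days: int = 30
) -> float:
    """Cost of the energy the GPU draws while it is rented out."""
    kwh = gpu_watts / 1000 * hours_per_day * days
    return kwh * price_per_kwh


def net_monthly_earnings(
    gross_earnings: float,
    gpu_watts: float,
    hours_per_day: float,
    price_per_kwh: float,
    days: int = 30,
) -> float:
    """Gross earnings minus electricity; ignores wear and tear."""
    return gross_earnings - monthly_electricity_cost(
        gpu_watts, hours_per_day, price_per_kwh, days
    )


GPU_WATTS = 250      # ASSUMPTION: sustained draw under load, varies by card
HOURS_PER_DAY = 18   # the "idle 18 hours a day" figure

for price in (0.10, 0.15):
    for gross in (10, 40):
        net = net_monthly_earnings(gross, GPU_WATTS, HOURS_PER_DAY, price)
        print(f"${price:.2f}/kWh, ${gross} gross -> ${net:.2f} net")
```

With these inputs the card burns 135 kWh a month, which costs $13.50 at $0.10/kWh and $20.25 at $0.15/kWh. The top of the $10–40 range still clears a profit, while the bottom of it loses money once electricity is counted.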

Why the marketplace actually works:

Consumer GPUs are genuinely capable for most AI workloads. A 3060 isn't an H100, but for serving a Stable Diffusion endpoint or a Whisper transcription pipeline, it's plenty. Big-box clouds charge multiples of what the underlying compute actually costs, so there's a market gap. Gamers have idle GPUs they already paid for, so there's untapped supply. Salad sits in the middle and takes a cut. Classic marketplace dynamics, just with GPUs instead of apartments or cars.

The economics work because both sides genuinely benefit. The buyer gets roughly 10x more inference per dollar, going by Salad's own claim. The seller makes something on idle capacity. The platform takes enough to stay operational. It's not a zero-sum trick. It's a different cost structure that serves a different market than the hyperscalers do.

The honest caveats:

Sellers: it's beer money, not a salary. Don't quit your job. Earnings vary wildly by GPU class, electricity cost, and geography. Set realistic expectations.

Buyers: consumer-GPU constraints are real. Don't try to serve a 70B model on this. Match workload to platform.

Both: you're trusting a third party with your compute or your workload. Salad is SOC2 and well-established, but it's still a layer of trust. Privacy-sensitive workloads (HIPAA-regulated data, customer PII) won't make sense here regardless of certification.

What you can do right now:

Head to salad.com if you're an AI startup looking to cut inference costs. The platform's pricing calculator and case studies give you the real picture of what $0.02/hour gets you and what workloads have been successful. For gamers, salad.io has the desktop app and earnings estimator. Download it, run it in the background, see what your GPU actually earns. It takes five minutes to find out if it's worth your electricity cost.

The close:

There's something quietly cool about tens of thousands of consumer GPUs, bought for Fortnite and Cyberpunk, running a real share of the internet's image generation while their owners are asleep. The marketplace works because both sides genuinely benefit. Pay pennies, earn pennies, build a side of the AI economy almost nobody thinks about.